How to Stop AI Hallucinations in Investment Research

The 3 Secrets Nobody Tells You About Using AI

I asked my subscribers one question:

“What’s your biggest challenge with AI for investing today?”

55% said the same thing: fear of hallucinations.

Here’s the truth:

You can’t eliminate hallucinations completely.

But you can reduce them by 99.99%.

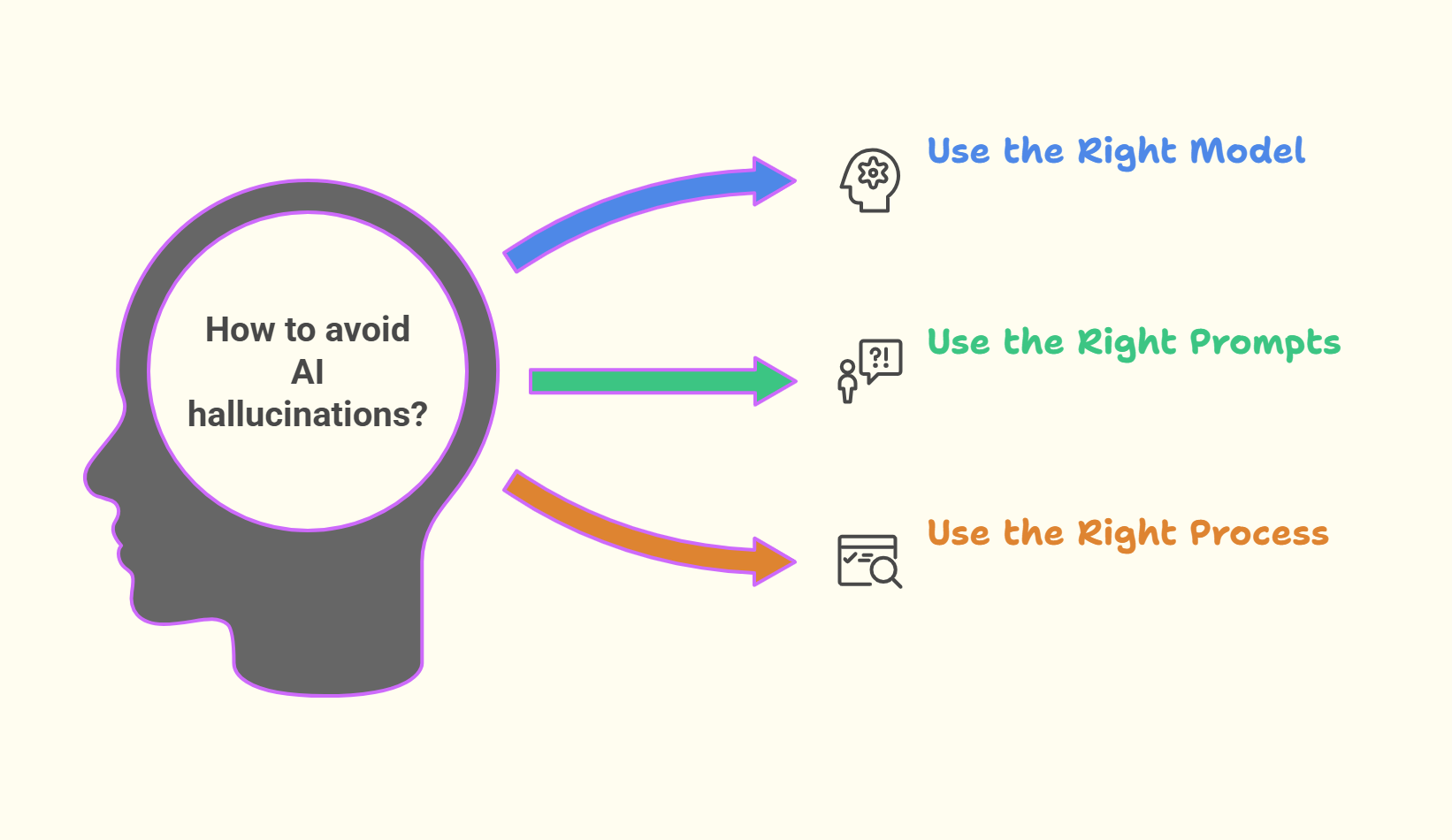

Today, I’ll show you the 3 secrets to keeping hallucinations out of your AI investing process:

Secret #1: Use the Right Model (This alone cuts hallucinations by 70%)

Secret #2: Use the Right Prompts (This constrains what AI can invent)

Secret #3: Use the Right Process (This catches what slips through)

What AI Actually Does (And Why It Hallucinates)

Ideally, AI would work like this:

It checks the annual report

It thinks like an analyst

It runs calculations in the background…

Unfortunately, that’s not what’s happening. Like… not even close.

The real mechanism (simple version)

At its core, an LLM does one thing:

It predicts the next word.

Then the next.

Then the next.

Word by word.

Token by token.

That’s it.
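To make that concrete, here is a toy sketch of next-word prediction. It is nowhere near a real LLM: it just counts which word follows which in a tiny made-up corpus (the corpus and the function names are my own assumptions for illustration) and always picks the most frequent follower. The point is that nothing in it checks a fact.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption for illustration, not real training data).
CORPUS = (
    "apple has strong pricing power . "
    "apple has strong brand loyalty . "
    "apple has strong pricing power ."
).split()

def build_bigram_counts(words):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_words=5):
    """Repeatedly append the most frequent next word, stopping at a period."""
    output = [start]
    for _ in range(max_words):
        followers = counts.get(output[-1])
        if not followers:
            break  # the model has never seen this word, so it has nothing to add
        next_word = followers.most_common(1)[0][0]
        if next_word == ".":
            break
        output.append(next_word)
    return " ".join(output)

counts = build_bigram_counts(CORPUS)
print(generate(counts, "apple"))  # prints: apple has strong pricing power
```

The sentence comes out fluent because “pricing power” follows “strong” more often than “brand loyalty” does in the corpus, not because anything was verified.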

Example: Ask AI about Apple’s pricing power

You type: “Explain Apple’s pricing power”

The LLM does NOT:

Open Apple’s 10-K

Check margins

Verify anything

Instead, it asks internally:

“Given everything I’ve learned about how humans write about businesses, what usually comes next?”

Common patterns it’s seen:

“brand strength”

“ecosystem lock-in”

“switching costs”

“customer loyalty”

So it generates: “Apple has strong pricing power because its brand and ecosystem lock customers in…”

That’s true and it sounds like analysis.

But mechanically, it’s pattern completion, not verification.

And AI writes so well, it’s always very convincing.

Where hallucinations come from

Now you ask: “What was Apple’s ROIC in 2013?”

The model isn’t confident about the exact number.

It faces a choice:

Stop and say “I don’t know”

Generate a plausible-looking number

Often, it does the second.

Not to lie.

It just refuses to say “I don’t know.” Like a lot of people we know.

This is what we call hallucination:

Confident text that is not grounded in reality.

For investors, that’s dangerous.

Now here’s the good news:

AI isn’t useless.

You just need to know the 3 secrets that make hallucinations all but disappear.

Let’s start with the biggest one.

Secret #1: Use the Right Model

This is the single biggest lever.

Most people use AI in “chat mode”, the default setting.

And that’s where 70% of hallucinations come from.

There are different ways to use AI.

Each one has a different impact on hallucinations.

Most people stay at Level 1:

Level 1: Chat Mode (No Sources)

This is the default mode.

And the most dangerous.

How it works:

You type: “Explain Microsoft’s cloud growth drivers”

The AI immediately generates text based on:

Language patterns about “cloud growth”

Common narratives about Microsoft

What “usually comes next” in this type of writing

What it does NOT do:

Read Microsoft’s 10-K

Check earnings transcripts

Verify any facts

Result:

You get a plausible explanation that may be completely wrong.

Hallucination risk: High

Level 2: Chat Mode WITH Sources (You Upload Files)

This is better but still limited.

How it works:

You upload Microsoft’s 10-K and ask:

“Based on this report, explain why cloud revenue grew 25%”

Now the AI:

Ingests the document in chunks

Retrieves passages it judges relevant

Generates an answer conditioned on those retrieved passages

Important:

It does NOT read the entire document line by line.

It works on retrieved sections, not full coverage.
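Here is a rough sketch of that chunk, retrieve, then answer flow. Everything in it is an assumption for illustration: the three passages are made-up placeholder text for a fictional filing, and simple word overlap stands in for whatever relevance scoring a real product uses. What it shows is that the model only ever sees the passages that win the ranking.

```python
import re

# Made-up placeholder passages for a fictional filing (not real company data).
CHUNKS = [
    "Cloud revenue grew because enterprise customers expanded usage of hosted services.",
    "Footnote 12: cloud revenue includes a one-time contract reclassification.",
    "The company opened new offices and hired additional staff during the year.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(chunks, question, top_k=1):
    """Rank chunks by how many words they share with the question; keep the top_k."""
    question_words = tokenize(question)
    ranked = sorted(
        chunks,
        key=lambda chunk: len(question_words & tokenize(chunk)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(passages, question):
    """Assemble what the model would actually see: retrieved passages plus the question."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return f"Answer using only these passages:\n{context}\n\nQuestion: {question}"

question = "Why did cloud revenue grow for enterprise customers?"
retrieved = retrieve(CHUNKS, question)
prompt = build_prompt(retrieved, question)
print(prompt)
```

In this toy run only the first passage makes it into the prompt. The footnote about the one-time reclassification is never shown to the model, so the answer cannot account for it.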

What improves:

The answer is guided by the source material, but not strictly limited to it

Guessing is reduced

Hallucinations are less frequent

The model can say “not mentioned” if information isn’t retrieved

What’s still a problem:

The model only reasons over documents and passages it actually retrieves

If a key section is missed, the analysis can be incomplete

The system may still:

Miss footnotes or edge cases

Misinterpret accounting language

Over-generalize from partial context

Hallucination risk: Medium

Level 3: Deep Research Mode (The Game-Changer)

This is the mode serious investors should use.

Deep Research is not just a button you click.

It’s a research agent that uses an LLM.

The simple way to think about it:

LLM → writes text

Deep Research → decides what to look for, finds it, then asks the LLM to explain

Deep Research = LLM + search + reading + planning + synthesis

It is not a smarter LLM.

It is an LLM coordinating other systems before it starts writing.
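If it helps to see the shape of that coordination, here is a minimal sketch. It is not how any vendor’s Deep Research product is actually built: `plan`, `search`, `read`, and `synthesize` are hypothetical stand-ins that return placeholder strings, and in a real agent each would call a search tool, a document reader, or an LLM. The only thing to notice is the order: writing comes last, after the sources have been collected.

```python
from dataclasses import dataclass, field

@dataclass
class ResearchRun:
    """Keeps track of what the agent did and what it collected."""
    question: str
    log: list = field(default_factory=list)
    notes: list = field(default_factory=list)

def plan(question):
    """Hypothetical planning step: break the question into sub-queries."""
    return [f"{question} (annual report)", f"{question} (earnings call)"]

def search(query):
    """Hypothetical search tool: returns placeholder document ids."""
    return [f"doc-for:{query}"]

def read(doc_id):
    """Hypothetical reading step: returns a placeholder note for a document."""
    return f"note from {doc_id}"

def synthesize(question, notes):
    """Hypothetical LLM call: writes the answer from the collected notes only."""
    return f"Answer to '{question}' based on {len(notes)} source notes."

def deep_research(question):
    """Plan, search, and read first; ask the LLM to write only at the end."""
    run = ResearchRun(question)
    queries = plan(question)
    run.log.append("plan")
    for query in queries:
        run.log.append("search")
        for doc_id in search(query):
            run.log.append("read")
            run.notes.append(read(doc_id))
    run.log.append("synthesize")
    answer = synthesize(question, run.notes)
    return answer, run

answer, run = deep_research("cloud growth drivers")
print(run.log)
print(answer)
```

Compare this with chat mode, where the equivalent of `synthesize` runs immediately with an empty list of notes.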